Cold emailing is a great way to reach out to prospects and build meaningful connections.

However, sending out emails into the void and hoping for the best is not enough. You must optimize different elements of your email to ensure they drive the desired results.

This is where A/B testing swoops in. It helps you navigate the wild, unpredictable skies of cold email outreach and perfect every element of the email to run successful campaigns.

In this blog, we'll tell you all about cold email A/B testing and how you can take your efforts from guesswork to data-driven success.

What Is A/B Testing for Cold Emails?

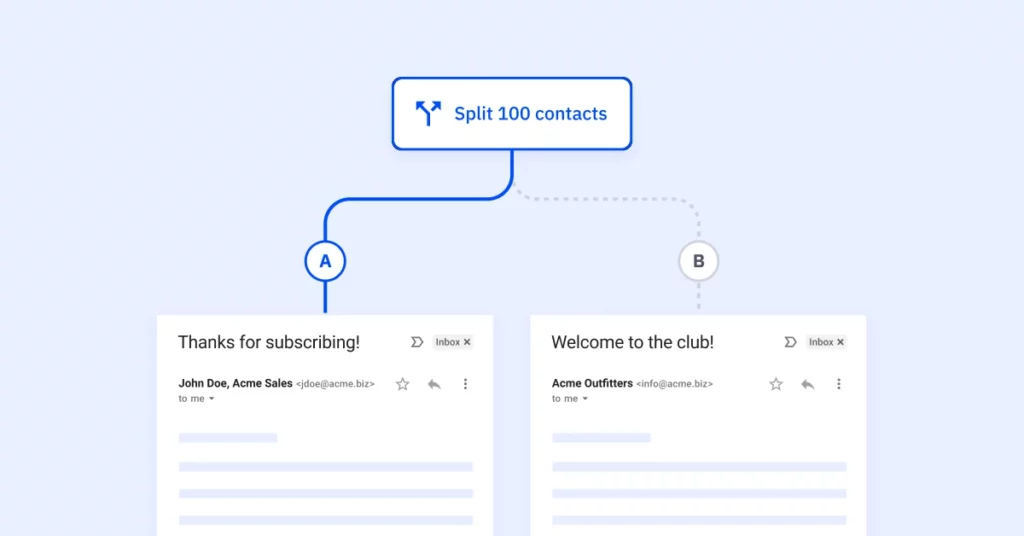

A/B testing, also called split testing, helps you compare different versions of something to determine which performs better. In the context of cold emailing, it involves sending two or more variations of an email to different prospect groups. These groups should be comparable, with similar interests or characteristics, so that any difference in results comes from the email and not the audience.

You can experiment with different subject lines, CTAs, or entire email sequences. The idea is to determine which version does the best job.

But before you throw on your lab coat and safety goggles and start running experiments, here are a few guidelines to get you started on cold email A/B testing.

What Elements Should You A/B Test in Your Cold Emails?

A/B testing your cold emails is an effective way to discover what truly resonates with your audience. But first, you must determine exactly what you want to test. You can A/B test different elements of your email, like:

- Subject Lines

Subject lines help you get your foot in the door. They form the first impression that determines if your emails get opened or overlooked. You can use different words, tones, or even emojis to see what works best.

- Email Content

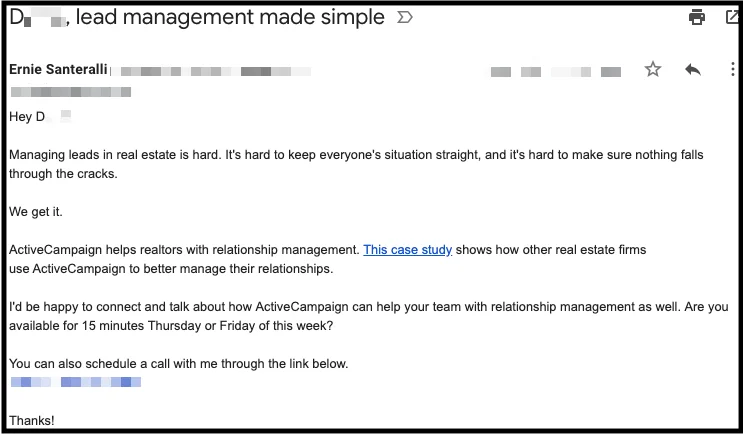

This is the heart of your message that conveys your value proposition, story, and CTA. When you write a cold email, you can experiment with the level of personalization, a storytelling approach, or being direct.

- Call-To-Action (CTA)

A CTA is a bridge that guides your prospect to the next step. You can experiment with variations that evoke urgency, curiosity, or empathy and observe which prompts the most clicks.

- Visual Elements

These are images, fonts, and colors that enhance your message and capture the reader's attention. You can test emails with and without visuals or experiment with different variations to see what works best.

- Email Signature

Your email signature is the final garnish that leaves a lasting impression and adds a professional touch. You can experiment with different formats or links to understand which style encourages more responses.

A/B testing these elements will help you enhance engagement and drive responses, ultimately improving cold email deliverability. To test these elements, there are 3 key metrics you can track:

- Open Rate

Open rate is the percentage of recipients who actually open your email out of the total emails you send. It helps you measure the effectiveness of your subject lines. A high open rate indicates your subject lines are pretty darn good and make people curious enough to open your email and see what's inside.

Playing around with different subject lines can help you improve the cold email open rate.

- Click Rate

Click rate is the percentage of recipients who click on a link or CTA within your email. It measures how engaged and interested your recipients are after they've opened your email. A high click rate suggests your email content and CTAs are really grabbing their attention and making them want to take action.

It could be visiting your website, checking out a product, or downloading a resource. By testing different CTAs, content structure, and link placements, you can understand what motivates your prospects to interact with your cold emails and improve your click-through rate.

- Reply Rate

Reply rate is simply the percentage of recipients who respond to your emails. It shows they're interested and want to keep the conversation going. A high reply rate indicates your email has struck a chord, resonating with them on a personal level. If you're looking to boost cold email responses, you can experiment with personalized content, catchy subject lines, and irresistible CTAs.
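The three metrics above boil down to simple percentages. Here is a minimal Python sketch of the arithmetic, assuming you can export raw counts per variant from your outreach tool. The `VariantStats` structure and the numbers are made up for illustration, and every rate here is calculated against emails sent (some tools use emails delivered or opened instead).

```python
from dataclasses import dataclass


@dataclass
class VariantStats:
    """Raw counts for one email variant (hypothetical structure)."""
    sent: int
    opened: int
    clicked: int
    replied: int


def rate(count: int, sent: int) -> float:
    """Return count as a percentage of emails sent (0.0 if nothing was sent)."""
    return 100.0 * count / sent if sent else 0.0


def summarize(stats: VariantStats) -> dict:
    """Compute open, click, and reply rates for one variant."""
    return {
        "open_rate": rate(stats.opened, stats.sent),
        "click_rate": rate(stats.clicked, stats.sent),
        "reply_rate": rate(stats.replied, stats.sent),
    }


# Made-up counts for two variants of the same email.
variant_a = VariantStats(sent=200, opened=90, clicked=24, replied=10)
variant_b = VariantStats(sent=200, opened=110, clicked=30, replied=16)

print("A:", summarize(variant_a))
print("B:", summarize(variant_b))
```

Comparing the two summaries side by side shows which variant leads on each metric, though a lead on a small sample may not hold up.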

While each element has its own significance, one pivotal element can help you maximize the impact of your cold email campaigns: the subject line. It is the gateway to your email and holds the power to capture your recipient's attention and unlock high open rates. Let's see how you can optimize your cold email subject lines through A/B testing.

A/B Testing Your Subject Lines

33% of recipients decide if they'll open an email just by looking at the subject line. This means if you don't nail this crucial element, a third of your prospects won't even bother opening your email.

A well-crafted, catchy cold email subject line can be the difference between your email being opened with anticipation or being dismissed without a second thought. A/B testing your subject lines will help you understand what really clicks with your audience. Here are some ideas you can try to test your subject lines:

Personalizing the Subject Line

In a world of generic content and spammy emails, personalization stands out like a sparkly gem. A cold email subject line that addresses your recipient by name or mentions their company can create an immediate connection. You can split test your subject lines based on the level of personalization. Alternatively, you can create two variations, with and without personalization, to see which one drives better open rates. For example:

With and without their name

A: [Recipient's name], increase your website traffic by 10X

B: Increase your website traffic by 10X

With and without their company name

A: Effective lead generation techniques

B: Effective lead generation techniques for [company name]

With and without their role/position

A: 5 unique ways to generate more leads

B: 5 unique ways to generate more leads every [marketer] should know

Length of the Subject Line

Brevity and curiosity are the secret weapons of a killer subject line. Short subject lines build up the mystery, intriguing the recipient to open the email and learn more. But longer subject lines can provide more context, assuring the recipient that the email is relevant to them. A/B testing the length of the subject line can help you gauge which resonates better with your audience, leading to more opens and higher engagement. For example,

A: Got a minute, [prospect name]?

B: Here's how you can solve [pain point] and drive better results

Using Lower Case

Do you, like most sales reps, lean towards using title case in your email subject lines? It might not always be the best approach. A subject line written mostly in lowercase letters can stand out in your prospect's inbox and come across as casual and friendly. You can test different cases in your subject lines to see which is more effective. Sometimes, even a tiny shift in style can significantly improve your open rates. For example,

A: Automate Your Marketing in Less Than a Minute

B: Automate your marketing in less than a minute

Utilizing Power Words

Certain words have the power to evoke emotion and curiosity. They can pique the recipient's interest and drive action. You can experiment with different power words in your subject lines to trigger different responses. For example,

- Words like "elusive," "priceless," or "little-known" can make your prospects curious.

- Words like "certified," "case study," and "tested" can help build trust.

- Words like "amazing," "brilliant," and "mind-blowing" can appeal to their sense of vanity.

- Words like "simple," "painless," and "easy" can appeal to their desire for simplicity.

- Words like "beware," "dangerous," and "toxic" can evoke fear in their mind.

Asking Questions

Curiosity is a powerful motivator. Asking a question in your subject line invites the recipient to seek an answer within your email. You can A/B test subject lines that present a question versus those that make a statement. This will help you assess which approach generates more opens and better engagement. For example,

A: Sales qualified leads for you.

B: Looking for sales-qualified leads?

Email Content A/B Testing

Once you've nailed down your subject line, it's time to dive into the heart of your cold email—the actual content. Testing different versions of your email content helps you tweak the message and boost the likelihood of getting a meaningful response. Let's look at some key strategies to ensure your emails are not only opened but also leave a lasting impression.

Preview Text Variations

The preview text is like a tiny trailer for your email's content. It is the second thing your recipient sees after the subject line, yet sales reps often overlook it. If you don't set the preview text, the inbox will display the beginning of your email by default. Since the preview text can influence whether the recipient opens your email or lets it slip by, don't ignore this feature. Test different variations to encourage the reader to click. You can highlight a pain point, include data, or showcase a benefit. For example,

A: Discover the secret to boosting your sales in 2023

B: Don't miss out on our limited 20% discount

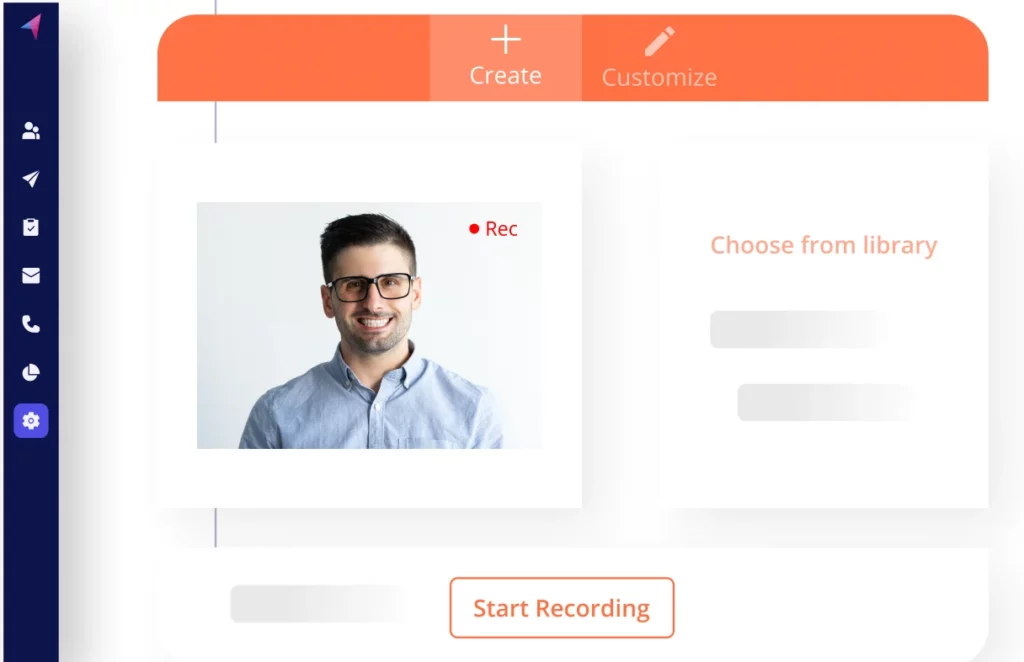

Use Images, GIFs, and Videos

Visuals can jazz up your email and make your content more interesting. But make sure they're relevant. You can A/B test emails with and without visuals or play around with GIFs and short videos to convey your message more dynamically. This will help you gauge which multimedia elements drive higher click-through rates and overall engagement.

Pointing Out Pain Points

Addressing your recipient's pain points in your email shows you understand their challenges. But does it work better than highlighting solutions and benefits? A/B testing can help you find the answer. You can experiment with addressing pain points versus emphasizing benefits or even different styles of conveying the same message.

A: Are you struggling with low website traffic?

B: We can help you increase your website traffic with our proven strategies.

Experiment With Links by A/B Testing

Adding links to cold emails is a common practice. You can use them to send the recipient to a relevant blog, prompt them to download a resource, visit your website, check your calendar availability, and more. If done correctly, these links can help boost your cold email click-through rates. Here are the different variations you can try:

Number of Links

The more links you add to your cold emails, the more spammy you'll come across. Plus, they'll overwhelm the recipient with too many choices. It is generally advised not to use more than 3 links in an email. But you can experiment with just one link. Think of the goal you want to achieve with the email and use a link that supports it. A single link is also less distracting and keeps the prospect focused.

Using Various Anchor Texts

The words you use for your link's anchor text can influence the recipient's decision to click. A/B test different anchor texts that highlight benefits, spark curiosity, or give clear directions. This will help you analyze which anchor text drives higher click-through rates and better engagement. For example,

A: Learn more about our services

B: Discover how we can transform your business

Changing the Link Destination

Where a link leads can greatly impact engagement. A/B testing helps you mix things up and see which link destinations hit the right spot with your audience. Try sending recipients to a landing page, a blog post, a video, or a product page. You can then measure the click-through rates to understand which destination drives more action. For example,

A: Link directed to a blog post with industry insights

B: Link directed to a video explaining your product's features

A/B Test Your CTAs

One metric to measure the success of your cold email campaigns is the number of clicks your call-to-action (CTA) button gets. The CTA acts as a guiding star, helping you steer your recipients toward the desired action. Therefore, it must be inviting and convey the objective clearly. You can experiment with different dates and times, intriguing questions, or valuable resources. Here are some A/B testing approaches for your CTAs:

Testing Specific Dates and Times

If the goal of your cold email is to schedule a call or a meeting, provide a specific date and time. It reduces the mental processing power needed for the prospect to reply to the email. All they need to do is respond with a simple yes/no/alternate date in this case. Alternatively, you can ask them for their preferred date and time to show you are flexible. For example,

A: Are you available for a 20-minute chat on Sep 10 at 3 p.m. EDT?

B: What is a suitable time to speak to you tomorrow for 15 minutes?

Value-Driven CTA Approach

You can use the CTA to tell the recipient how they'll benefit from taking the action. It can help you connect the dots between different parts of your email and summarize it in one simple and distilled benefit statement for the prospect. Running A/B tests with different value-driven CTAs will help you identify the one that drives the highest response rates. For example,

A: Can we get on a call this Friday at 10 a.m. to discuss this?

B: Can we get on a call this Friday at 10 a.m. to discuss how we can help you generate a more qualified pipeline?

Share Something Valuable

CTAs that promise to share valuable resources can pique the recipient's interest. You can offer downloadable guides, white papers, research reports, or eBooks. But should you share these right away or ask for the recipient's permission to start a two-way conversation?

On the one hand, sharing the resource directly shows you genuinely want to help the prospect without expecting anything in return. On the other hand, if you can get the prospect to respond to a smaller request, chances are they'll be more likely to say yes to something bigger down the line. A/B test both these variations to discover the best approach. For example,

A: Do you mind if I share a 5-minute video of how we can help you improve your outbound lead generation?

B: Here's a 5-minute video of how we can help you improve your outbound lead generation. Is this something you would be interested in?

Confirmation Question vs. Open-Ended Question

How you phrase your CTAs can also influence how the recipient responds to them. You can use a confirmation question that requires the prospect to respond with a yes/no. Or you can pose an open-ended question that prompts them to share more. Here's an example:

A: Is this a problem that you are facing currently?

B: What is the biggest challenge holding you back from reaching your revenue goals?

A/B Test Your Email Sending Time

Timing your cold email is important. It helps you ensure your carefully crafted messages don't just land in your recipients' inboxes but also capture their attention at the right time. A/B testing the day and time of sending your emails will help you optimize engagement and maximize the impact of your campaign. Here are two critical elements you must test:

Test Across Different Times

What is the best time to send a cold email? Would we even need an A/B test if we had a definitive answer? Research suggests not one but 3 best time frames to send cold emails:

- between 7 and 8 a.m.

- between 11 a.m. and 12 p.m.

- between 4 and 5 p.m.

The best way to figure out the optimal time for sending cold emails is to A/B test them by scheduling them to be sent out at different times. For example, create two groups of prospects from the same time zone and schedule the same email to be delivered to them in two different time frames. Then, track your open rates to determine which time works best.
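One way to create the two groups is to assign prospects at random, so that neither group is skewed toward a particular kind of recipient. The sketch below is only an illustration: the email addresses are made up, the prospects are assumed to share a time zone, and `split_prospects` is a hypothetical helper, not a feature of any specific tool.

```python
import random


def split_prospects(prospects, seed=42):
    """Shuffle prospects and split them into two near-equal groups."""
    shuffled = list(prospects)
    # A fixed seed keeps the split reproducible between runs.
    random.Random(seed).shuffle(shuffled)
    middle = len(shuffled) // 2
    return shuffled[:middle], shuffled[middle:]


# Made-up prospects, all assumed to be in the same time zone.
prospects = [f"prospect{i}@example.com" for i in range(1, 11)]
group_a, group_b = split_prospects(prospects)

# Same email, two different sending windows.
send_plan = {"7-8 a.m.": group_a, "4-5 p.m.": group_b}
for window, group in send_plan.items():
    print(window, len(group), "prospects")
```

Each prospect lands in exactly one group, so the same person never receives both sends.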

Test Different Weekdays

Just as there are conflicting narratives on the best time to send emails, there are multiple studies on the best days to send them.

Is it on a weekday or a weekend?

If it's a weekday, then is it on a Monday, Wednesday, or Friday?

In our experience, cold emails sent to senior management/CXOs have better open rates on weekends. But don't expect the same from millennials or Gen Z workers. Run a few A/B tests to find the best day to email your prospects.

For example, create two groups of prospects from the same time zone. Schedule the same email to be delivered to one group on a Thursday and the other on a Saturday. Continue until you've covered all days and found the best option.

Best Practices for Running Cold Email A/B Tests

A/B testing different elements of your cold email can help you improve the success of your cold email campaign. But before you run off to start testing, you must be aware of four critical best practices. After all, a poorly run A/B test will not only waste your time but also generate dodgy results. Here are the essential guidelines you must follow:

1. Choose Only One Variable to Test

To accurately understand the impact of a specific change, focus on testing only one element at a time. Whether it's subject lines, email content, or CTAs, focusing on only one variable helps you pinpoint the exact factor that is affecting engagement. This gives you clarity and enhances the reliability of your results.

2. Ensure Consistent Conditions

Maintaining consistency across your tests is absolutely crucial. You must ensure that all other factors remain the same between your versions—from recipient demographics to the time of day (unless, of course, you're experimenting with different time frames). Why? Because it prevents external variables from messing with your results. This way, you can accurately attribute any differences to the specific element you're testing.

3. Have a Large Sample Size

A large sample size makes it more likely that the differences you observe are statistically significant and not just random noise. It provides more reliable data and gives a clearer picture of how your changes impact engagement. A small sample may give you misleading results that don't accurately represent the overall behavior of your recipients.
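To see why sample size matters, you can check whether the gap between two variants is bigger than chance alone would explain. The sketch below uses a pooled two-proportion z-test, one common choice among several. The open counts are made up, and 0.05 is a conventional cutoff, not a rule.

```python
import math


def two_proportion_p_value(success_a, total_a, success_b, total_b):
    """Two-sided p-value for the difference between two rates (pooled z-test)."""
    rate_a = success_a / total_a
    rate_b = success_b / total_b
    pooled = (success_a + success_b) / (total_a + total_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    if std_err == 0:
        # Both variants have identical 0% or 100% rates: no evidence of a difference.
        return 1.0
    z = (rate_b - rate_a) / std_err
    # For a standard normal variable, P(|Z| > |z|) = erfc(|z| / sqrt(2)).
    return math.erfc(abs(z) / math.sqrt(2))


# Same open rates (45% vs. 55%) at two made-up sample sizes.
small = two_proportion_p_value(18, 40, 22, 40)
large = two_proportion_p_value(180, 400, 220, 400)

print(f"40 emails per variant:  p = {small:.3f}")
print(f"400 emails per variant: p = {large:.3f}")
```

With these counts, the 40-email test gives a p-value well above 0.05, so the gap could easily be chance. The 400-email test gives a p-value below 0.05 for the same rates.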

4. Keep the Test Duration Long Enough

A/B testing requires a sufficient duration to capture reliable trends. Don't rush to make a decision based on a few days' worth of data. Be patient and give enough time (maybe a few weeks or even months) for a large chunk of recipients to interact with your emails. That way, you'll get some solid, meaningful data to work with.

Improve Your Cold Email Success Rate With A/B Testing

Every click, open, and response contributes to the success of your cold email campaigns. Cold email A/B testing is a powerful weapon that helps you achieve this by sculpting your outreach strategy with precision. It allows you to tweak every little element like the subject line, email content, CTA, sending time, and more to supercharge the impact of your emails and ensure they hit the bull’s eye every time.

With Klenty's cold email A/B testing feature, this process becomes streamlined and seamless. It is a cold emailing software that empowers you to test, analyze, and adapt, all while amplifying the impact of your outreach campaigns. Klenty equips you with data-driven insights so you can optimize your cold emails and drive results.

FAQs

What should I A/B test in an email?

- Subject lines

- Email content

- Links

- CTAs

- Sending time, and more

What are the benefits of performing cold email A/B testing?

- It gives you data-driven insights about what works best for your audience, helping you make informed decisions.

- It helps you optimize your cold emailing strategy, driving higher open, click-through, and response rates.

- It helps you better understand your audience's preferences, behaviors, and pain points.